NeuroSkill™

According to a report from Menlo Ventures, about 1.7 to 1.8 billion people have used large language model (LLM)-based systems, and about 600 million of them use LLMs daily.

The recent success of autonomous agents shows that there is demand for LLM harnesses that are unprecedented in their intent and their agency.

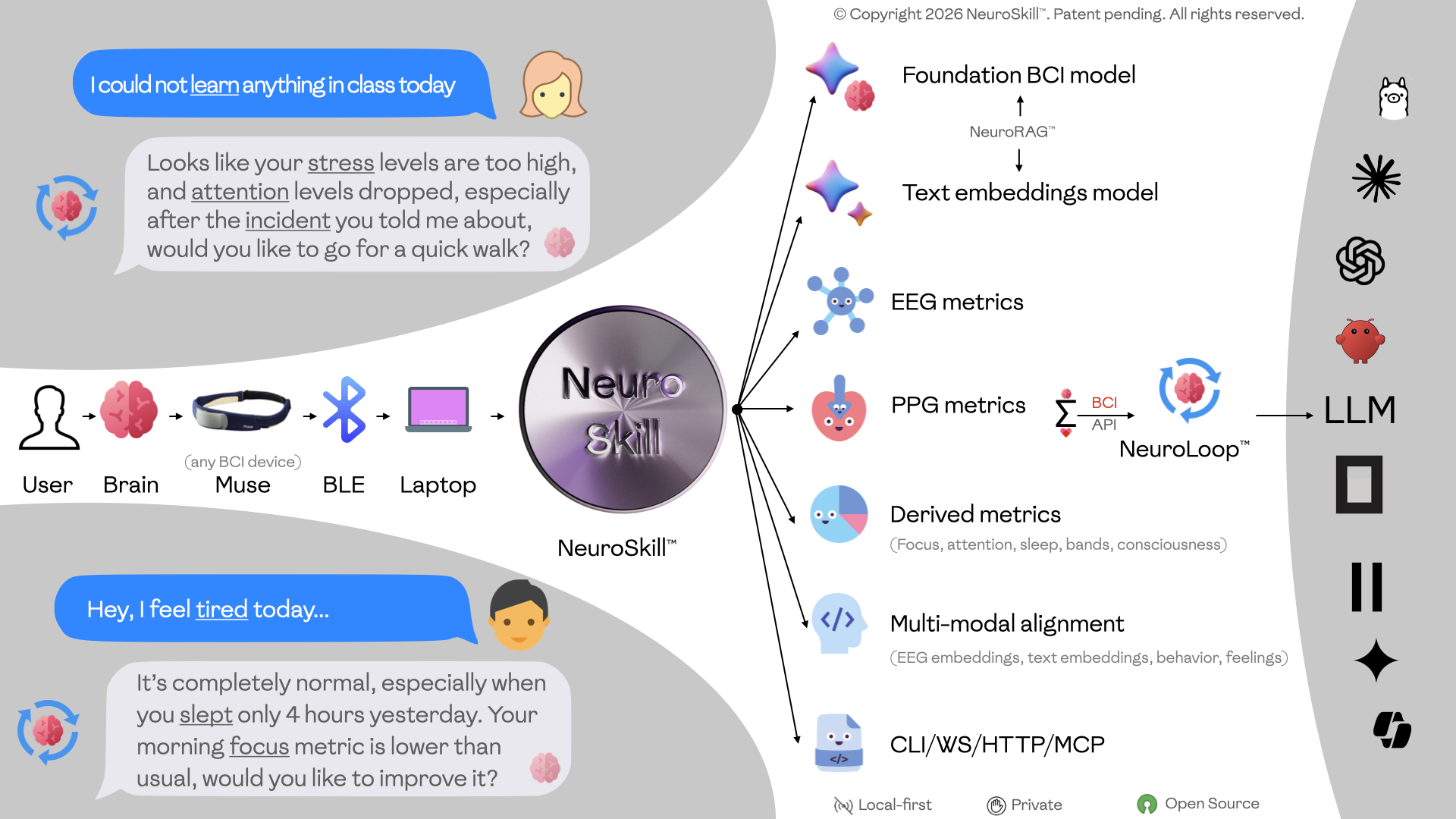

While systems such as OpenClaw aim to provide autonomy to agents, this work explores a different dimension of the relationship between Human and agent: how an agent can understand what a Human feels, what a Human does, and how a Human’s experiences shape their behavior – ultimately influencing the interaction and communication between the agentic system and the Human.

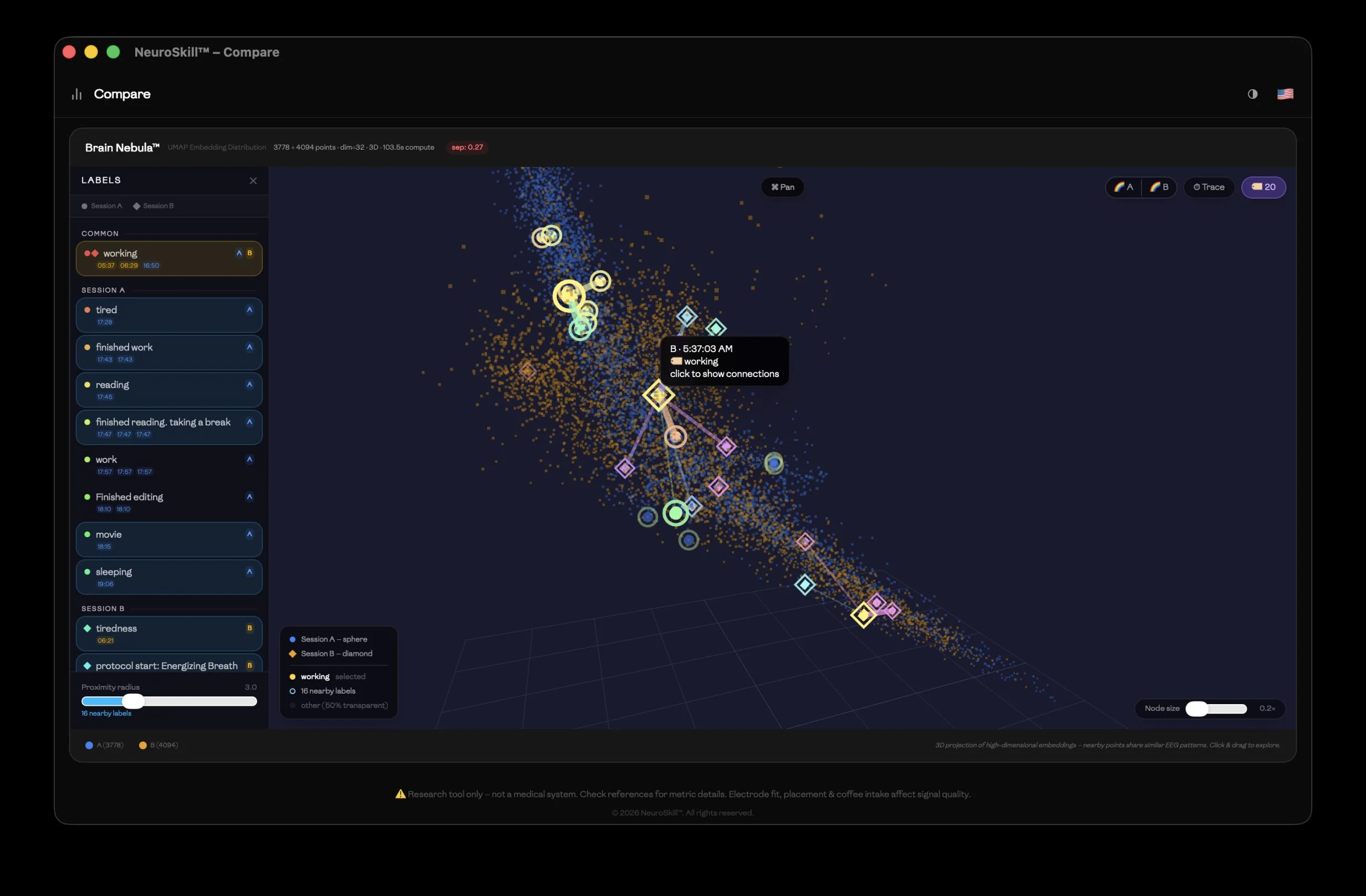

In this work we present a novel system designed to build a foundational representation of the brain states provided by the Human, which the Agent can index, align, search, and navigate. In most cases this is done in real time and on the edge (your local personal computer) while engaging with the Human; in other cases, it is used to perform deep sleep research while the Human is not in an active conscious state.

We offer a simple set of markdown files backed by the Application Programming Interface (API) and Command Line Interface (CLI) that we released under the open-source GPLv3 license. These files allow the LLM harness to execute the agent, and allow the agent to engage with a new set of biophysical data that carefully models Human State of Mind, behavior, emotions, social cues, relationships, and anything the user can express in writing or by voice, or even what the user does not have to or cannot express: they might just feel it, think it, imagine it, or experience it.

We call this system NeuroSkill™.

It encapsulates an always-running app that collects data from Brain-Computer Interface (BCI)-enabled wearable, non-invasive devices (the system can be extended to ingest data from any invasive or non-invasive device available on the market today). Access is provided via the command `npx neuroskill <command>` and can be edited as described in the published SKILL.md markdown files.
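As a minimal sketch, the `npx neuroskill <command>` entry point mentioned above could be wrapped from Python roughly as follows. Only the `npx neuroskill <command>` form comes from the text; the helper names `build_neuroskill_argv` and `run_neuroskill`, the 30-second timeout, and any subcommand name passed in are assumptions for illustration. The actual subcommands are those documented in the SKILL.md files.

```python
import shutil
import subprocess
from typing import List, Optional


def build_neuroskill_argv(command: str, *args: str) -> List[str]:
    """Return the argv for `npx neuroskill <command> [args...]`.

    The helper itself is hypothetical; `command` must be a subcommand
    taken from the published SKILL.md files.
    """
    if not command or command.startswith("-"):
        raise ValueError("expected a subcommand name, not a flag or empty string")
    return ["npx", "neuroskill", command, *args]


def run_neuroskill(command: str, *args: str, timeout: float = 30.0) -> Optional[str]:
    """Run the CLI and return its stdout, or None if `npx` is not on PATH.

    Raises subprocess.CalledProcessError if the CLI exits with a
    non-zero status, and subprocess.TimeoutExpired on timeout.
    """
    if shutil.which("npx") is None:
        return None
    result = subprocess.run(
        build_neuroskill_argv(command, *args),
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,
    )
    return result.stdout
```

Passing the arguments as a list rather than a single shell string avoids shell quoting issues when a harness forwards agent-generated arguments to the CLI.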

The LLM harness we designed is called NeuroLoop™.

It is a unique implementation because it not only relies on the tools and skills provided to the system but also determines in real time whether additional data needs to be requested, aligned, or generated. As a result, it proactively adjusts its recommendations for the Human’s behavior to align with the goals the Human initially set as the Agent’s main objective.

The proposed system is highly adjustable and modifiable. A Human with no coding skills can extend it simply by providing new markdown files and explaining in plain English how they want the agent to use them inside NeuroLoop™.

Motivations

Emerging research raises concerns about the cognitive and societal implications of LLM usage. The emergence of agentic systems offers more flexible interfaces than expensive LLM fine-tuning and greater versatility than Retrieval-Augmented Generation (RAG) systems.

Mass adoption of wearable devices continues to increase year over year. Affordable EXG systems like Muse, OpenBCI or AttentivU make consumer-grade BCI devices ubiquitous by design.

Big tech corporations collect vast datasets from users of their devices such as the Apple Watch, Samsung Galaxy Watch, Fitbit, Oura, and others. Very few of these datasets are published or actively used to benefit the very individuals from whom they were collected.

With brain data, the risk–benefit model is different, and as a species, we cannot afford to let BCI innovation be concentrated in the hands of a few.

Our goal in publishing this work is to encourage neuroscientists, developers, caregivers, students, and others to view the value of their brains differently and to make their own brains work for them.

We open-source the full implementation of the system so anyone can use it.

Even better, the NeuroLoop™ system itself can help its user modify it and its source code, making it highly tailored to the user’s needs — all without violating the user’s privacy, rights, or dignity.

Recognizing that this is the first system of its kind — built on decades of scientific and open‑source effort by humanity — we welcome feedback and aim to contribute to a better future for Brain‑Computer Interfaces.

Research and Publications

NeuroSkill™: Proactive Real-Time Agentic System Capable of Modeling Human State of Mind

Nataliya Kosmyna, Eugene Hauptmann. 2026. (Version 0.0.1) [Software]. https://arxiv.org/abs/2603.03212

NeuroSkill™ Website

Check out the project’s website to learn more and download it.